The risks of Delayed Durability

3April 12, 2016 by Kenneth Fisher

It’s T-SQL Tuesday again and this month we are hosted by Jens Vestergaard (b/t) who want’s us to talk about our favorite SQL Server feature. Great topic, but a really difficult choice. There are so many great features of SQL Server.

It’s T-SQL Tuesday again and this month we are hosted by Jens Vestergaard (b/t) who want’s us to talk about our favorite SQL Server feature. Great topic, but a really difficult choice. There are so many great features of SQL Server.

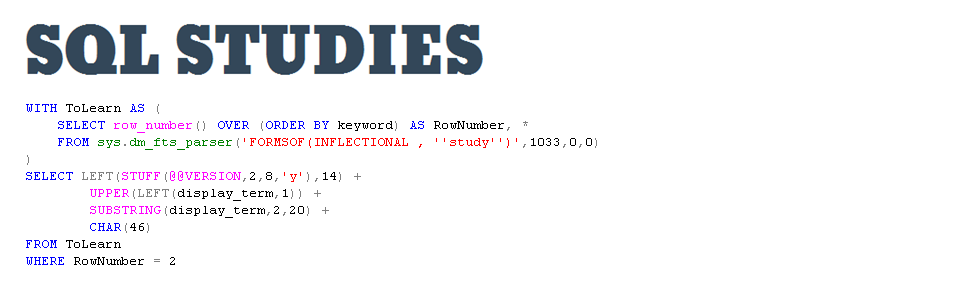

Some of my favorites include:

- String manipulation functions

- The optimizer

- CTEs

- Delayed Durability

- SSIS

- Any number of DMOs

- Data collector

In the end I decided it would be fun to post about one of the newer features; Delayed Durability. To understand DD (delayed durability) you have to understand how SQL Server implements durability. To start with there are two pieces of any data change. The actual data page and any log records that are created. In order to keep SQL Server as fast as possible the data pages are changed in memory and then written to disk as part of a background process. Unfortunately this means that you could lose those changes if the server crashes or shuts down before they are written to disk. In order to avoid this, and keep the changes durable, the transaction can not complete until the log information is actually written to disk. This means that one of the big bottlenecks in any given transaction is writing that log record to disk. The DD option basically changes that write from a synchronous to an asynchronous operation. The log writes are bundled together in 60k chunks before being written to disk. This can (depending on your work load) provide a huge increase in speed. I’m not going to bother discussing that part of it since Aaron Bertrand (b/t) and Melissa Connors (b/t) wrote great posts on just that (Aaron’s and Melissa’s).

What I want to discuss is the actual risk of data loss.

Spoiler: I think the risk can be minimized, but that still doesn’t mean you should turn DD on unless you have a) tested it, b) it actually does provide a performance improvement for you, and most importantly c) you can accept the risk of data loss regardless of how large.

The way I see it there are three major categories. You could be in SIMPLE recovery model, FULL recovery model, or some form of HA (high availability). Note: Yes, I realize that HA has nothing to do with recovery model (aside from FULL being required) but in this case I see it as an added layer to the discussion.

Before I begin I want to point out that you can manually cause the log to flush by running a fully durable transaction (possible if you are using the ALLOWED setting as opposed to FORCED), or by running the sys.sp_flush_log stored procedure.

Simple Recovery

If your database is set to SIMPLE recovery then you have already decided that some data loss is acceptable. As an example let’s say you are running full backups every night at 2AM (and to minimize the risk you are running sys.sp_flush_log right before the backup as part of the backup job. At that point there is no difference between the server failing at 10am, 2pm, or even 4:37 pm. You still have to recover to the full backup. Now there is still an effect since backups contain the information as of the end of the backup not the beginning. So transactions that completed after the beginning of the backup (when we ran sys.sp_flush_log) and the end but have not been hardened by being written to the log could be lost. Still I would call that a pretty minimal risk.

Full Recovery

You are running in FULL recovery so you are more concerned about data loss. But how much is acceptable? Again to make this simple we will use an example. You’re business (because it’s their decision) has decided that 30 minutes of data loss is acceptable. You’ve decided that you’ll be taking log backups every 15 minutes, and since you have delayed durability turned on you add a job that runs every five minutes that flushes the log to disk. So now our risk of data loss (assuming all of our backups are good) is well within the allowed tolerance. In fact if you have the opportunity to take a tail of log backup then your maximum risk (outside of a failed backup) is 5 minutes.

HA

If you are using a synchronous form of HA then I wouldn’t use delayed durability. Delayed durability is asynchronous by nature and that doesn’t really fit. But if you are already using an asynchronous form of HA then when you do add some risk (I’d still be running the 5 minute job to flush the log though to minimize it though) but all you are doing is adding to the asynchronous part. Still an additional risk though. Unfortunately I’m not really experienced enough with HA to say more than that.

Conclusion

If you’ve decided that DD is going to improve your performance and you can, or already are, able to tolerate some data loss then I would say go for it. A job that flushes the log will minimize that risk (potentially dramatically). Particularly if you are running a light workload and it might be a fair amount of time before you reach that 60kb limit.

You will hear an argument that the data loss could be greater if there was a long running transaction that hadn’t been hardened yet. And while yes, I guess that’s technically true, realistically I don’t feel it’s significant. Assuming that you are running a busy enough system that the log is writing to disk say every 10 minutes, then that long running transaction will be hardened after an additional 10 minutes. So what’s the difference between a crash during that 10 minute period or one a few minutes earlier? And if you are all that worried about it add a log flush to the end of your transaction.

Delayed durability is a risk. No doubt. But it can be minimized. And if you are careful with it, for the right workload, it can make an amazing difference in performance.

[…] The Risk of Delayed Durability […]

If you are using a synchronous form of HA then I wouldn’t use delayed durability,

Here I blv transaction will get more slow as log will not be hardened on log disk on secondary replica if primary log block is not full.

Please correct me.

I’m not sure about the transaction speed but you absolutely don’t want to use delayed durability in combination with a synchronous HA. Based on the AG & Mirroring section of this link: https://docs.microsoft.com/en-us/sql/relational-databases/logs/control-transaction-durability?view=sql-server-2017 it sounds like the transactions on the secondary become delayed as well which in my mind makes them asynchronous.