A quick fix for a full identity column.

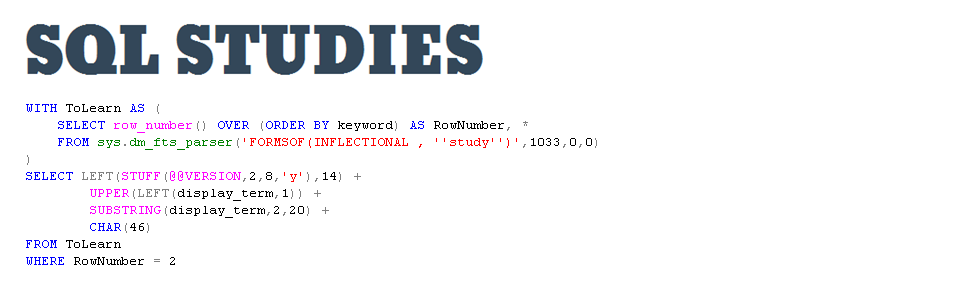

2September 21, 2016 by Kenneth Fisher

A while back I wrote a post on everything I knew about identity columns. In it I mentioned the following:

- Negative increments can be handy if your identity column has reached the max value of the data type. Reseed to 0 (assuming that is where you started) and set your increment to -1.

The idea is that you have a table with an identity column that is your primary key (and probably clustered index). You started it at (1,1) (start at 1 and increment by 1 at a time). Unfortunately you’ve recently discovered that you are about to run out of values. You need a quick fix.

Paul Randal(b/t) recently mentioned in his SQL Skills Insider email that actually the best way to handle it was to go to negative max int (-2,147,483,648) and work your way back up (keeping the increment at 1). That seemed a bit odd to me.

Now one of the reasons people like identity columns as a clustered index is that they are ever increasing. When the current page is full a new one will be created, the row added to it and off we go. On the other hand if you try to insert a value anywhere but the end and the page you try to insert into is full then you have to have a page split. A new page is created, half of the rows are moved to the new page and the row is then written. If it sounds kind of slow that would be because it is. So based on that this is what I expected to happen (I’m using -99 rather than negative max int for the demo)

Well Paul told me this wasn’t the case. Now when Paul tells me something I believe him, but I also like to run tests. So I decided to use sys.fn_PhysLocCracker(%%physloc%%). %%physloc%% returns a varbinary that gives you the location of the row. When passed to sys.fn_PhysLocCracker(%%physloc%%) it returns the database file, page in the file, and slot number where the row can be found. So to start with I create an identity(1,1) and I run 20 inserts, one at a time, checking row locations each time. This is to confirm I’m right about this part.

-- Create the table with identity(1,1)

-- The identity column is the primary key which is

-- the clustered index by default.

CREATE TABLE RowLocationTest (

Id int NOT NULL IDENTITY(1,1)

CONSTRAINT pk_RowLocationTest PRIMARY KEY,

Col1 CHAR(1500));

GO

INSERT INTO RowLocationTest VALUES (REPLICATE('a',1000));

GO

SELECT Id, file_id, page_id, slot_id

FROM RowLocationTest

CROSS APPLY sys.fn_PhysLocCracker(%%physloc%%)

ORDER BY file_id desc, page_id desc, slot_id desc;

GO

So this part was no problem. It worked just like I expected. Now we change the seed value to -100 with the next value of -99.

DBCC CHECKIDENT (RowLocationTest,RESEED,-100); GO

And then run inserts and check them.

INSERT INTO RowLocationTest VALUES (REPLICATE('a',1000));

GO

SELECT Id, file_id, page_id, slot_id

FROM RowLocationTest

CROSS APPLY sys.fn_PhysLocCracker(%%physloc%%)

ORDER BY file_id desc, page_id desc, slot_id desc;

GO

Well, I know this will come as a surprise but Paul and the people who designed SQL know better than me. What actually happens is you get two page splits and right after the second you get a new page. Then from that point on there are no more page splits, just new pages.

As for my original idea, on further research it looks like you can’t modify the increment without creating a whole new table and moving the data. Since we are trying to do this to fix a large table that doesn’t sound like a terribly useful option.

Good one Kennath !!!

Thanks!