Conversion Precedence

1September 20, 2022 by Kenneth Fisher

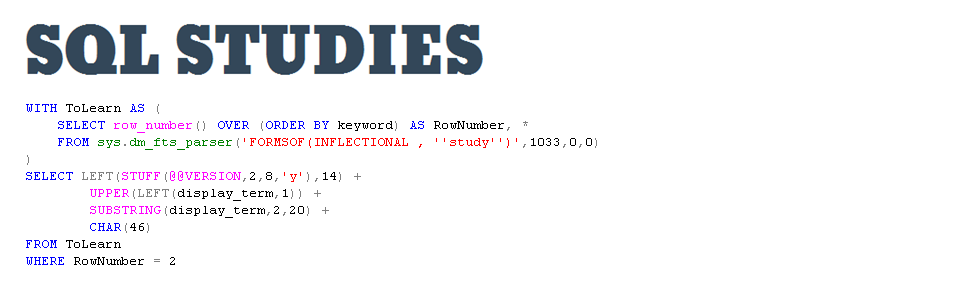

Recently I talked about the difference between implicit and explicit datatype conversions. In it I ran this code:

DECLARE @IntA INT = 1, @IntB INT = 2;

SELECT 1.0*@IntA/@IntB;

With the expectation that you would get back a decimal. Did you wonder why it converted the integers to decimals rather than the other way around? If not you probably should have. I’ll give you a hint. It’s not because it’s the first value in the equation, although that does make a difference.

Thought about it? In case you didn’t figure it out T-SQL (and again, I’m going to say all programing languages where implicit conversions are allowed) has a conversion precedence order. In other words in any calculation where an implicit conversion is required the datatype with a value higher on the list is converted to the datatype with the lower value on the list. So for example:

- date

- time

- float

- real

- decimal

- money

- smallmoney

- bigint

- int

- smallint

- tinyint

A tinyint will be converted to any of the other types of int datatypes and of course int will be converted to decimal. Will time will be converted to date? No, because that particular implicit conversion isn’t allowed. This list only applies to implicit conversions that are possible.

You probably don’t need to memorize the full list (found in the link above) but you should probably know the more common conversions.

Now, where I said above that the decimal being first in the equation does matter. Why does it matter? The implicit conversion happens with the order of operations. 1.0*@IntA is 1.0 then divide that by @IntB gives you 0.5. If you reverse it and do @IntA/@IntB*1.0 then you get @IntA/@IntB is 0 then multiply by 1.0 is 0.0. Not hard on a simple calculation but if you have something more complex you might need to think it through a bit.

Last but not least I want to point out a few outliers.

- user-defined data types (highest)

- sql_variant

- …..

- binary

Nothing will be implicitly converted to binary. It’s last on the list. sql_varient is weird and I’ll handle it in it’s own post. And first but not least, user-defined data types took me a minute to understand. If you are just aliasing a default data type it just uses the default data type. Like this:

CREATE TYPE IntPrecedence FROM int NOT NULL;

GO

DECLARE @Test IntPrecedence = 3;

PRINT @Test/2.0;

This still returns 1.5. However if you if you create a true user defined data type using CLR then it will probably act like it is truly the top of the list. Unfortunately I don’t have the skill to create a datatype like this, and I couldn’t find an example to share either. So I’m just making an assumption but let’s call it an educated guess.

[…] Kenneth Fisher sorts out data types: […]